Deepfakes in Indian Elections: The Manipulated Videos You Probably Believed

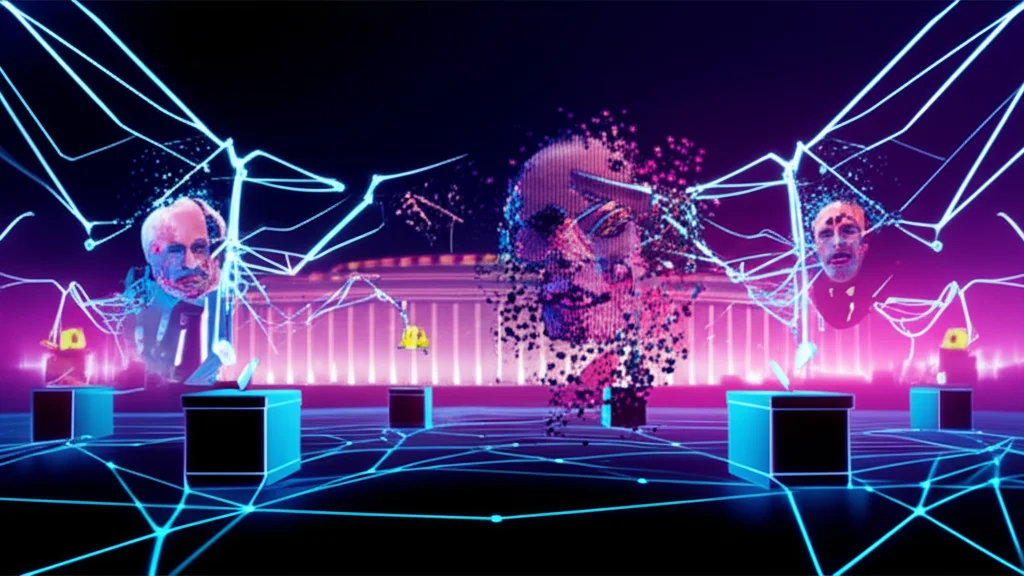

TL;DR: India's 2024 general election saw deepfakes go from fringe curiosity to mainstream political weapon. Over 75% of voters were exposed to AI-generated political content, both major parties (BJP and Congress) used it aggressively, and the legal framework is still playing catch-up. Here's what happened, how to spot fakes, and what's changed since.

The Dead Man Who Gave a Speech

In Tamil Nadu, the DMK did something that would have been science fiction five years ago. It created an eight-minute video of former leader M. Karunanidhi, who died in 2018, delivering a speech praising his son M.K. Stalin. The AI-generated Karunanidhi looked into the camera, moved his lips in sync with the audio, and addressed party workers as if he were still alive.

It wasn't hidden. The party put it out openly. And that's the thing about deepfakes in Indian politics: some are covert weapons designed to deceive, and others are proudly displayed campaign tools. The line between the two is where democracy gets messy.

When Fake Became Normal

India's 2024 Lok Sabha election wasn't the first time deepfakes showed up in Indian politics. That distinction goes to BJP MP Manoj Tiwari in 2020, who used AI to address Delhi voters in Haryanvi and English even though he had recorded the speech only in Hindi. The lip-synced videos spread across 5,800 WhatsApp groups, reaching an estimated 15 million people.

But 2024 was a different scale entirely. The numbers are staggering:

| Metric | Figure |
|---|---|
| Voters exposed to political deepfakes | 75%+ |
| AI-generated voice clone calls (2 months pre-election) | 50 million+ |
| Estimated party spending on authorized AI content | $50 million |
| Voters who believed AI content was real | ~25% |

India topped the World Economic Forum's Global Risks Report 2024 as the country facing the highest misinformation risk in the world. With 535 million WhatsApp users and 467 million on YouTube, the distribution infrastructure for fake content was already built.

The Hit List: Real Cases That Went Viral

Both BJP and Congress weaponized deepfakes. Neither side has clean hands.

Congress's AI attacks on Modi: The party shared a parody video in February 2024 where Modi's face was superimposed on singer Justh's body in the viral song "Chor" (thief), with AI-cloned audio of Modi's voice paired with visuals of him alongside Gautam Adani. Congress also released deepfake videos of Ranveer Singh and Aamir Khan criticizing Modi, racking up half a million views before both actors filed police cases.

BJP's attacks on Rahul Gandhi: A video surfaced showing Gandhi's face superimposed on Bihar opposition leader Tejashwi Yadav, making it appear he was addressing Mamata Banerjee. Another clip used AI-generated audio to fabricate him resigning from the party.

The Kejriwal jail video: After Arvind Kejriwal's arrest in March 2024, a deepfake showed him singing "Bhula Dena Mujhe" from Aashiqui 2 inside jail, complete with a guitar. It spread through BJP-affiliated WhatsApp groups.

The Telangana confession: During the November 2023 state elections, a seven-second deepfake showed KT Rama Rao calling on people to vote for Congress. Congress's official X account posted it. A party leader told Al Jazeera: "It was AI-generated though it looks completely real." The clip drew over 500,000 views.

Owaisi singing bhajans: AIMIM leader Asaduddin Owaisi was depicted in a deepfake singing devotional Hindu songs. The intent was clearly to mock him and provoke communal tension.

How Cheap and Easy It's Become

Creating a political deepfake used to require serious compute power. What cost $50,000 in cloud computing in 2023 now costs about $5. A full deepfake video runs around $1,500; an audio clone costs roughly $720.

The process is disturbingly simple. Creators record about 20 minutes of a target's voice, including singing to capture different phonemes and tones. They take pictures in front of a green screen, feed everything into AI models, and produce synthetic media that's increasingly difficult to distinguish from reality.

Voice cloning has become the bigger threat. Over 50 million AI-generated voice clone calls were made in the two months before the April 2024 elections. In Tamil Nadu alone, 250,000 personalized AI calls went out in the voice of a former chief minister. When your phone rings and a politician's voice asks for your vote, how do you know it's really them?

The BJP also used AI legitimately through Bhashini, a government-backed tool that translated Modi's speeches into Telugu, Tamil, Malayalam, Kannada, Odia, Bengali, Marathi, and Punjabi with AI voiceovers. Useful? Absolutely. But it blurs the line further between acceptable AI use and manipulation.

How to Spot a Deepfake (Practical Tools)

You don't need to be a tech expert. Here are real tools available to Indian voters:

WhatsApp Tipline (Deepfakes Analysis Unit): The Misinformation Combat Alliance launched a WhatsApp tipline at +91 9999025044 in March 2024. Forward suspicious audio or video, and a team of 12 fact-checking organizations (including BOOM Live, India Today, The Quint, Vishvas News) will analyze it. Available in English, Hindi, Tamil, and Telugu.

Fact-checking organizations to follow:
- BOOM Live invested in Loccus, a professional audio deepfake detection tool
- Logically Facts covers Indian political misinformation extensively
- Vishvas News runs Hindi-language fact-checks
- Factly focuses on Telugu and English verification

Free detection platforms:
- TrueMedia.org integrates detection into web browsers
- Northwestern University's GODDS (Global Online Deepfake Detection System) is free for journalists and the public

What to watch for yourself:
- Unnatural blinking or eye movements
- Lighting inconsistencies on the face vs. background
- Audio that doesn't quite match lip movements (especially in Indian languages, where lip-sync models have seen less training data)
- Skin texture that looks too smooth or waxy
- Odd head movements or a "floating head" effect
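The blinking cue can be made a little more concrete. One common heuristic from blink-detection research is the eye aspect ratio (EAR): the eye's height divided by its width, which drops sharply when the eyelid closes. The sketch below is a minimal illustration, assuming you already have six (x, y) landmarks per eye for each video frame from some face-landmark detector (not shown). The 0.2 threshold and 2-frame minimum are illustrative assumptions, not calibrated values, and newer deepfake generators often blink convincingly, so an odd blink rate is at most one weak signal among several.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR for six landmarks p1..p6 around one eye.

    Assumed ordering: p1 and p4 are the eye corners, p2/p3 sit on the
    upper lid, p6/p5 on the lower lid directly below them.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series: List[float], threshold: float = 0.2,
                 min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = 0
    run = 0
    for ear in ear_series:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress on the last frame
        blinks += 1
    return blinks


def blinks_per_minute(ear_series: List[float], fps: float, **kwargs) -> float:
    """Blink rate over the whole clip, given the video's frame rate."""
    minutes = len(ear_series) / fps / 60.0
    return count_blinks(ear_series, **kwargs) / minutes if minutes > 0 else 0.0


# Usage with made-up landmark coordinates for one open and one closed eye.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.1), (2, 0.1), (3, 0), (2, -0.1), (1, -0.1)]
print(round(eye_aspect_ratio(open_eye), 3))    # 0.667
print(round(eye_aspect_ratio(closed_eye), 3))  # 0.067
```

Comparing the resulting rate against the same person's blink rate in footage known to be genuine is more informative than any fixed cutoff.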

India's Legal Response: Better Late Than Never

India has no dedicated deepfake law. But the regulatory framework has been tightening rapidly.

IT Rules Amendment (February 2026): The most recent and aggressive move. Platforms must now require users to declare content as AI-generated before publishing. All synthetic media needs clear disclosure labels with persistent metadata. Platforms that fail to comply risk losing their safe harbor protection under Section 79 of the IT Act. The takedown window for certain content dropped from 36 hours to 3 hours.

Election Commission guidelines: The ECI issued advisories in May 2024 requiring parties to remove deepfake posts within 3 hours during Model Code of Conduct periods. In October 2025 it went further: labels and watermarks on AI-generated visuals must cover at least 10% of the screen area, with the creator's identity embedded in metadata.
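To see what the 10% labeling rule means in pixels, here is a minimal sketch. It assumes the rule is read as "label area divided by frame area is at least 10%" and models the label as a single axis-aligned rectangle; the article does not say exactly how the ECI measures coverage, so the function names and that interpretation are hypothetical. Integer arithmetic is used so a label sitting at exactly 10% is not rejected by floating-point rounding.

```python
from dataclasses import dataclass

# Assumption: "at least 10% of screen area" read as label area / frame area >= 10%.
MIN_LABEL_PERCENT = 10


@dataclass(frozen=True)
class Rect:
    """A width x height region in pixels."""
    width: int
    height: int

    @property
    def area(self) -> int:
        return self.width * self.height


def label_fraction(frame: Rect, label: Rect) -> float:
    """Share of the frame covered by the label, from 0.0 to 1.0."""
    if frame.area <= 0:
        raise ValueError("frame must have positive area")
    return label.area / frame.area


def meets_label_rule(frame: Rect, label: Rect,
                     min_percent: int = MIN_LABEL_PERCENT) -> bool:
    """Exact integer comparison: label.area / frame.area >= min_percent / 100."""
    return label.area * 100 >= frame.area * min_percent


def min_banner_height(frame: Rect, banner_width: int,
                      min_percent: int = MIN_LABEL_PERCENT) -> int:
    """Smallest whole-pixel height for a banner of `banner_width` to satisfy the rule."""
    if banner_width <= 0:
        raise ValueError("banner_width must be positive")
    required = frame.area * min_percent             # required area, scaled by 100
    return -(-required // (100 * banner_width))     # ceiling division


hd = Rect(1920, 1080)
print(min_banner_height(hd, 1920))             # 108
print(meets_label_rule(hd, Rect(1920, 100)))   # False
```

On a standard 1080p frame, a full-width banner therefore needs to be at least 108 pixels tall, roughly the height of two or three lines of large caption text.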

BNS 2023 (effective July 2024): The new criminal code includes Section 353 on statements that threaten public order (up to 3 years imprisonment) and Section 356 on defamation, which can be applied to defamatory synthetic media.

DPDP Act 2023: Using a person's face or voice in a deepfake without consent can fall foul of the Act's personal-data protections, with fines up to ₹250 crore (approximately $30 million).

Still, enforcement is the weak link. Former Chief Election Commissioner S.Y. Quraishi put it bluntly: "Even if one person is misled into believing something and that changes his mind, it vitiates the purity of the election process. Deepfakes have made the problem of rumour-mongering during the polls graver by a thousand times."

How India Compares Globally

India's situation is unique in several ways. The 2024 global election cycle saw AI-generated misinformation in dozens of countries, but the predicted tsunami never fully materialized in most places. India was the exception.

Slovakia saw an audio deepfake surface two days before its 2023 election, and many observers believe it may have influenced the outcome. The US dealt with fake Biden robocalls that were quickly debunked. The EU's AI Act entered into force in August 2024.

But India's scale dwarfs everyone else: 50 million+ AI voice calls, 75% voter exposure, a $60 million deepfake industry that sprouted around the election cycle. On the flip side, India also built the world's first Deepfakes Analysis Unit and moved faster on platform regulations than most Western democracies.

What This Means for You

The next time a political video goes viral on your WhatsApp, pause before forwarding. The tools to verify it exist. The WhatsApp tipline is a text away. And the uncomfortable truth is that the party you support is probably producing deepfakes too.

Deepfakes aren't going away. They'll get cheaper, better, and harder to detect. The only real defense is a voter base that questions what it sees. That starts with you.

Sources

- GNET Research: Deep Fakes, Deeper Impacts

- Reuters Institute: AI deepfakes, bad laws, and a big fat Indian election

- World Economic Forum: How India is tackling deepfake misinformation

- Al Jazeera: Deepfake democracy in India's 2024 election

- MIT Technology Review: Indian politician using deepfakes to win voters

- Blackbird.AI: Indian voters inundated with deepfakes

- TechCrunch: India urges parties to avoid deepfakes

- NeGD: Deepfakes in India legal landscape

- Law.Asia: India tightens rules on deepfakes

- NPR: How deepfakes affected global elections in 2024

- Washington Post: AI deepfakes hitting elections

- Recorded Future: Political deepfakes report